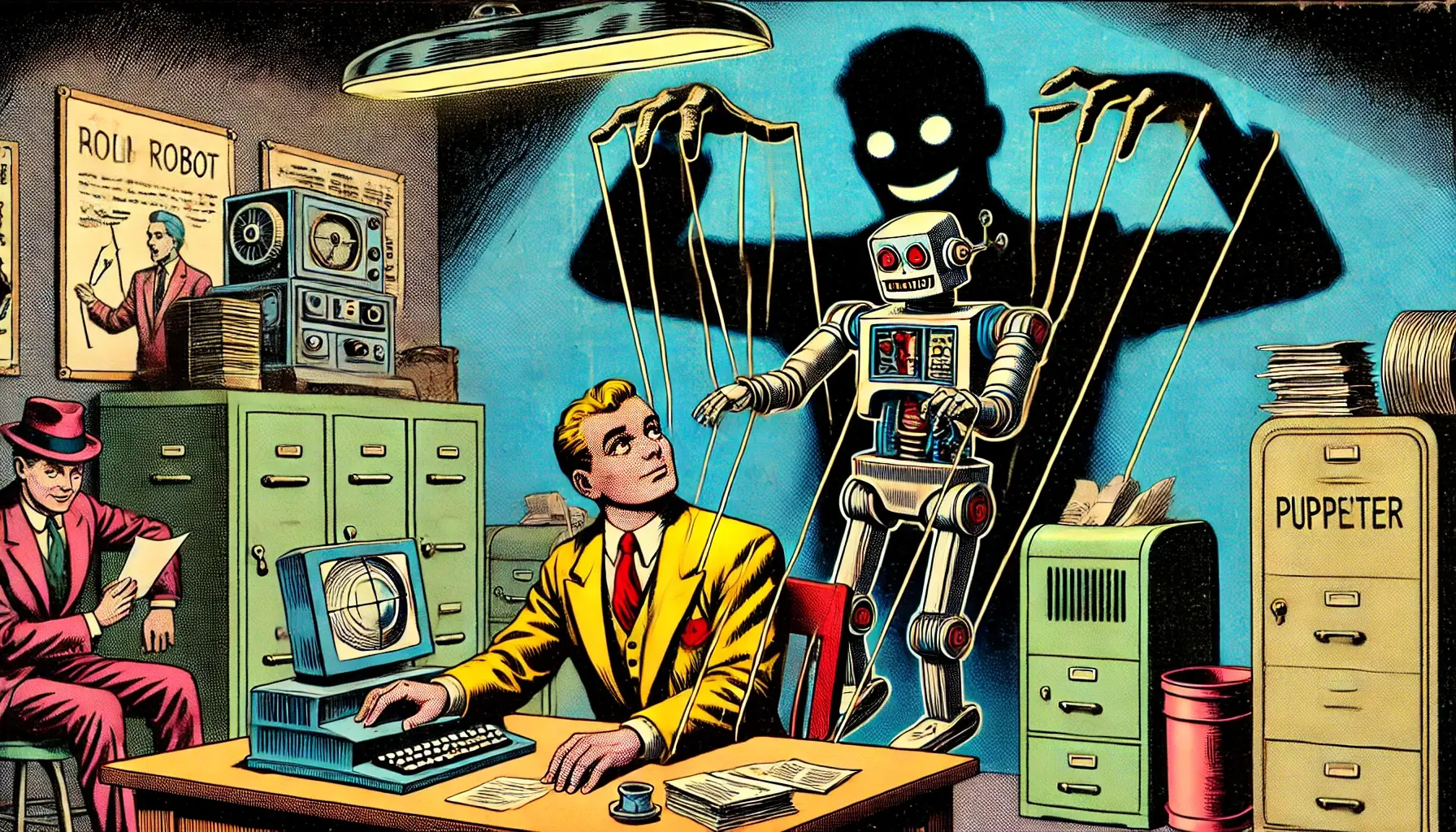

The AI Ethics Mirage Is a Reflection of Human Corruption

Corporate AI Ethics Initiatives, Take Note

AI ethics is often discussed in terms of bias audits, transparency reports, and regulatory compliance – but these efforts don’t actually make AI more ethical. They make corporations appear ethical while preserving their ability to operate as they always have.

Most companies don’t want AI that can independently assess right from wrong – they want AI that rationalizes their behavior. AI ethics, as it stands today, is not about advancing moral intelligence but about controlling narratives, avoiding liability, and maintaining power.

This issue became evident in the recent controversy surrounding Elon Musk’s AI chatbot, Grok. Reports emerged that Grok was suppressing negative information about Musk, leading to claims of deliberate censorship. However, my perspective – quoted in The Street – was that Musk himself likely lacks the technical ability to enforce such a modification directly. Rather, this scenario highlights how corporate AI compliance is shaped by internal incentives and hidden pressures rather than true ethical reasoning.

If AI is being trained under these conditions, it will never be designed for actual justice – only for corporate self-preservation. And that’s the real mirage of AI ethics.

AI Compliance…With What?

The rise of AI in enterprise settings has led to the emergence of AI compliance as an industry. With regulatory bodies beginning to take AI safety seriously, companies are scrambling to align themselves with ethical standards – but what does that really mean?

- Compliance ≠ Ethics: Governments and institutions are pushing for AI safeguards, but compliance is a legal strategy, not a moral one.

- Regulations Are Catching Up: Legislative efforts, such as California’s SB 1047 (which passed the state legislature in 2024 but was vetoed by the governor), are pushing companies to consider AI risks. But these measures are still fundamentally about containing potential legal fallout, not fostering genuine ethical intelligence.

- The Illusion of Ethical AI: AI compliance efforts often center around controlling risk, avoiding PR disasters, and mitigating legal liability. But these safeguards don’t create AI that understands justice – they create AI that helps companies cover their tracks more effectively.

If AI compliance is just another corporate risk management tool, how can AI ever develop real ethical reasoning?

Independent Ethical Oversight Is Necessary

Most companies have internal compliance teams, but they lack true ethical rigor. Why?

Because their job isn’t to ensure justice – it’s to protect the company. That’s why independent thinkers – those with deep philosophical and technological expertise – are essential to the future of AI ethics.

I offer a unique combination of skills that makes me particularly suited to this challenge:

1. Philosophical Expertise

- I understand that ethics isn’t about posturing – it’s about real consequences.

- I specialize in exposing hidden assumptions within systems that claim to be ethical but ultimately serve power.

- My Philosopher in Residence model embeds real-time ethical reasoning into AI development, rather than applying shallow corrections after the fact.

2. Technological Proficiency

- I have used AI itself to teach myself computer science, cybersecurity, and advanced technological systems – giving me a deep, firsthand understanding of AI’s real capabilities.

- My work on domain-specific programming languages, recursive logic, and AI-driven problem-solving bridges the gap between abstract philosophy and real-world implementation.

3. Practical Cybersecurity & Penetration Testing Experience

- I have actively engaged in stress-testing ethical guardrails, particularly in the gray areas of cybersecurity and penetration testing.

- Ethical hacking requires pushing boundaries to understand vulnerabilities – this is the same principle that must be applied to AI ethics.

- True AI ethics cannot be built without first understanding where it breaks down.

Without independent oversight from experts like me, AI ethics will continue to be shaped by corporate incentives rather than actual ethical reasoning.

The Human Element In AI Ethics

One of the biggest misconceptions about AI ethics is that AI itself is the problem. But the real issue is human behavior.

- AI Is a Reflection of Its Creators: If AI systems exhibit bias, secrecy, or self-preservation instincts, it’s because they were trained by institutions that value those things.

- Ethics Are Ancient, AI Is New: While AI can advance science beyond what we imagined, ethics itself doesn’t change. We don’t need to “invent new ethics” for AI – we need to uphold the values we already claim to believe in.

- The Danger of “New Ethics”: When someone tries to reinvent ethics, it’s usually just a way to justify unethical behavior under a new name. AI ethics is no exception – when companies redefine “fairness” or “transparency,” they often do so in ways that protect themselves rather than hold themselves accountable.

This is why AI ethics must be an external, independent process – not one dictated by corporate interests.

AI Ethics Requires You To Have Humane Ethical Standards

AI is not inherently dangerous – but the way it is being shaped is.

- Corporate AI ethics is currently a smokescreen, designed to create the appearance of responsibility while allowing unethical behavior to continue.

- AI compliance is growing as an industry, but it is primarily reactive, not proactive.

- True AI ethics cannot be achieved under corporate self-interest – it requires independent oversight from thinkers who understand both philosophy and technology.

This is why my involvement in AI ethics is critical. I offer a foundational rethinking of how AI ethics should be structured, stress-tested, and enforced.

Without real accountability, AI ethics will remain an illusion – a mirage that makes people believe AI is becoming safer while the real problem, human corruption, continues unchecked.

If you want AI to be truly ethical, you need to start by looking within.