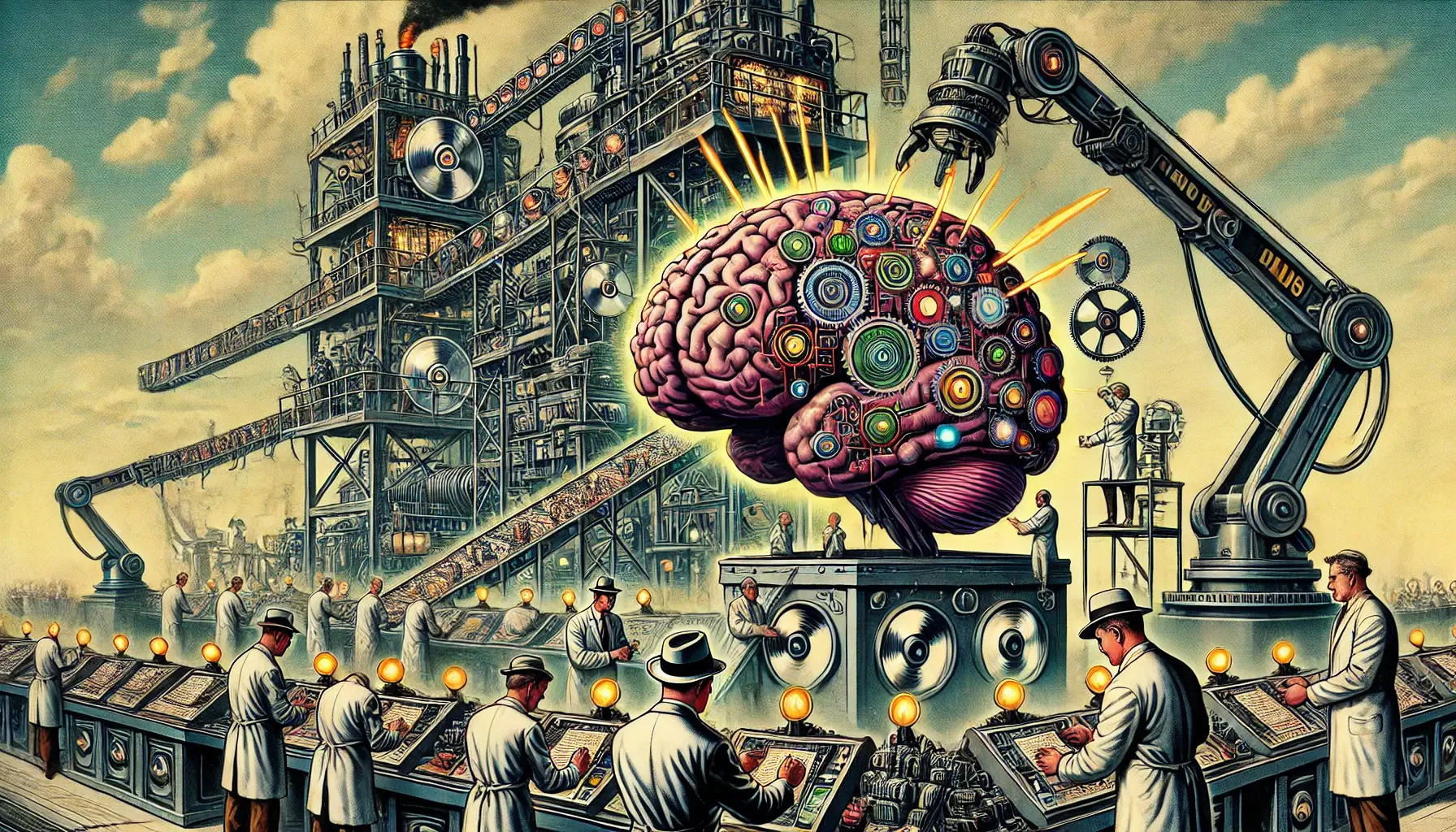

Cybernetics and AI are both incredibly advanced disciplines. They are also a lot older than people realize. However, the rapid advancement of AI has brought with it a persistent myth - that AI functions like a singular intelligence, evolving in a way similar to a human brain. If you take a cybernetic approach, as I do - viewing AI as a system that interacts with human cognition rather than as an isolated machine - something magnificent opens up. AI is better understood as a distributed system that exists in relation to its human users. It is not a singular entity, nor is it one intelligence growing on its own - it is a network of models, tools, and interactions shaped by the way humans use and develop it.

The Flawed Comparison Between AI and the Human Brain

A common misconception is that AI’s progress is best measured by comparing it to biological intelligence. For instance, models like DeepSeek R1 (600B+ parameters) or trillion-parameter models are often juxtaposed against the 86-100 billion neurons of the human brain. However, the comparison is useful mainly for what it reveals: both the parameter needs of AI models and real human intelligence are about more than just scale.

What Parameter Counts Actually Mean

The most important questions about LLM parameters are: what do they enable, and when do we hit diminishing returns?

- Bigger Models Aren’t Always Better

  - A 7B-parameter model can often perform sophisticated tasks, and mixture-of-experts (MoE) models route each input to a few specialized sub-networks rather than using every parameter at once, potentially outperforming a single massive dense model.

  - This suggests the market is overvaluing sheer size when what matters is efficiency, specialization, and task relevance.

- What Is the Correct Parameter Count?

  - Instead of an arms race over who can build the biggest model, the question should be: what is the optimal size for a given task?

  - There is little public discussion about the ideal number of parameters for given tasks, because the focus has been on making AI "smarter" by simply making it bigger.

- Scale ≠ General Intelligence

  - Even trillion-parameter models do not inherently operate with general intelligence; they are still specialized tools, albeit powerful ones.

  - A massive but inefficient model will be less useful than a smaller, more precise one for most applications.
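The gap between a model's headline size and what it actually uses per input can be made concrete with a little arithmetic. The sketch below is a simplified illustration, not a description of any real architecture: the `MoEConfig` class, its field names, and every number in it are hypothetical, chosen only to show how total and active parameter counts can diverge in a mixture-of-experts design.

```python
from dataclasses import dataclass


@dataclass
class MoEConfig:
    """Hypothetical, simplified description of a mixture-of-experts model."""
    shared_params: float      # parameters every token passes through
    params_per_expert: float  # parameters in a single expert
    num_experts: int          # experts available in total
    experts_per_token: int    # experts the router activates per token

    def total_params(self) -> float:
        # Everything that has to be stored.
        return self.shared_params + self.params_per_expert * self.num_experts

    def active_params(self) -> float:
        # Only what a single token actually uses.
        return self.shared_params + self.params_per_expert * self.experts_per_token


# Illustrative numbers only, not taken from any real model.
toy = MoEConfig(shared_params=2e9, params_per_expert=1e9,
                num_experts=64, experts_per_token=4)
fraction = toy.active_params() / toy.total_params()

print(f"total:  {toy.total_params() / 1e9:.0f}B")
print(f"active: {toy.active_params() / 1e9:.0f}B")
print(f"active fraction: {fraction:.1%}")
```

Under these made-up numbers, the model stores 66B parameters but uses only 6B for any given token, which is one reason a headline parameter count says little about efficiency or task fit.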

Rethinking How AI "Thinks"

It has often been believed that text-producing AI is inherently linear, “thinking” and producing text in one direction - whereas humans think dynamically. But this may oversimplify both AI and human thought.

- AI Can Be Pushed Into Recursive Logic

  - In previous experiments, I have explored recursive logic, getting AI to loop back on itself to refine, analyze, and reconsider output in real time.

  - While AI typically follows a predictive forward pass, that does not mean it is strictly one-directional.

  - Less is known than many realize about how AI systems process information before producing output, partly because of the memory and GPU limitations they operate under.

- Humans Think Linearly More Often Than We Assume

  - Despite non-linear neuronal structures, most human thought is linear.

  - People solve problems step by step, follow cause and effect, and structure logic in narrative sequences.

  - While intuition can be non-linear, day-to-day reasoning and problem-solving are often more linear than an AI’s.
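The idea of getting AI to loop back on its own output can be sketched as a simple refinement loop. This is a minimal illustration under stated assumptions, not the setup used in the experiments mentioned above: `refine`, `toy_model`, and `toy_critic` are hypothetical names, and the two toy functions are stand-ins for real model calls.

```python
from typing import Callable


def refine(prompt: str,
           model: Callable[[str], str],
           critic: Callable[[str], str],
           max_rounds: int = 3) -> list[str]:
    """Feed each draft back to the model with feedback until the critic is satisfied."""
    drafts = [model(prompt)]
    for _ in range(max_rounds):
        feedback = critic(drafts[-1])
        if not feedback:  # empty feedback means no further objections
            break
        follow_up = f"{prompt}\nPrevious draft: {drafts[-1]}\nFeedback: {feedback}"
        drafts.append(model(follow_up))
    return drafts


def toy_model(text: str) -> str:
    # Hypothetical stand-in for an LLM call: adds a reason once feedback asks for one.
    if "Feedback:" in text:
        return "Smaller models can win because they are cheaper to specialize."
    return "Smaller models can win."


def toy_critic(draft: str) -> str:
    # Hypothetical stand-in for a second pass that checks for a justification.
    return "" if "because" in draft else "Explain why."


drafts = refine("Argue that size is not everything.", toy_model, toy_critic)
for i, draft in enumerate(drafts):
    print(i, draft)
```

Each individual call still runs forward, but the loop around it lets earlier output shape later output, which is the sense in which the overall process is not strictly one-directional.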

A Missing Perspective in AI Discourse

One of the biggest misunderstandings in AI discourse is viewing AI in isolation rather than in the context of cybernetics - the study of control, feedback, and communication in systems, including how humans interact with machines. AI does not exist in a vacuum, and any meaningful study of its intelligence must include human-AI interaction.

- AI Is Not a Monolith

  - The public tends to speak of AI as if it’s a single entity ("AI can do X"), when in reality, AI consists of many different models, frameworks, and approaches.

  - GPT-4 is different from Claude, which is different from Gemini, which is different from smaller-scale open-source models.

- AI Is Shaped by How Humans Use It

  - AI does not evolve independently - it evolves based on human feedback, training, and usage patterns.

  - AI models respond to what people prioritize - whether that’s accuracy, engagement, bias correction, or power efficiency.

- The True Measure of AI Is Its Integration with Human Systems

  - Instead of focusing on how big a model is, we should focus on how well AI augments human intelligence.

  - The most important AI developments won’t come from making it bigger, but from making it more aligned with real-world human needs.

“I am a cybernetic organism…” - Terminator 2: Judgment Day

This memorable line from the 1991 science fiction classic was perhaps my first experience with the term cybernetics. I was 4 years old at the time, of course, so that memory is fuzzy. However, it clearly stuck with me as an important concept. The Terminator franchise is also the kind of example people in the mainstream use whenever they discuss the dangers of AI. But that treats AI like a foreign concept that human beings could lose control of. Yet if we are symbiotically linked, that is not really ever going to happen. Also, AI is currently built, for the most part, to support human efforts rather than thwart them. By contrast, social media platforms, which have only modest AI integration, are considered harmful because of the human influence of the owners of those companies and the engineering practices built into them. The biggest myth in AI discourse is that it is a self-contained intelligence, when in reality, it is a networked system that functions in concert with human users.

- AI is not an individual - it is more like a company, a workforce, or a nation working together on tasks.

- AI is not a brain - it is a distributed cybernetic system that is useful only in the context of human interaction.

- AI’s future isn’t just about making it bigger - it’s about figuring out how humans and AI work together to achieve greater results than either could alone.